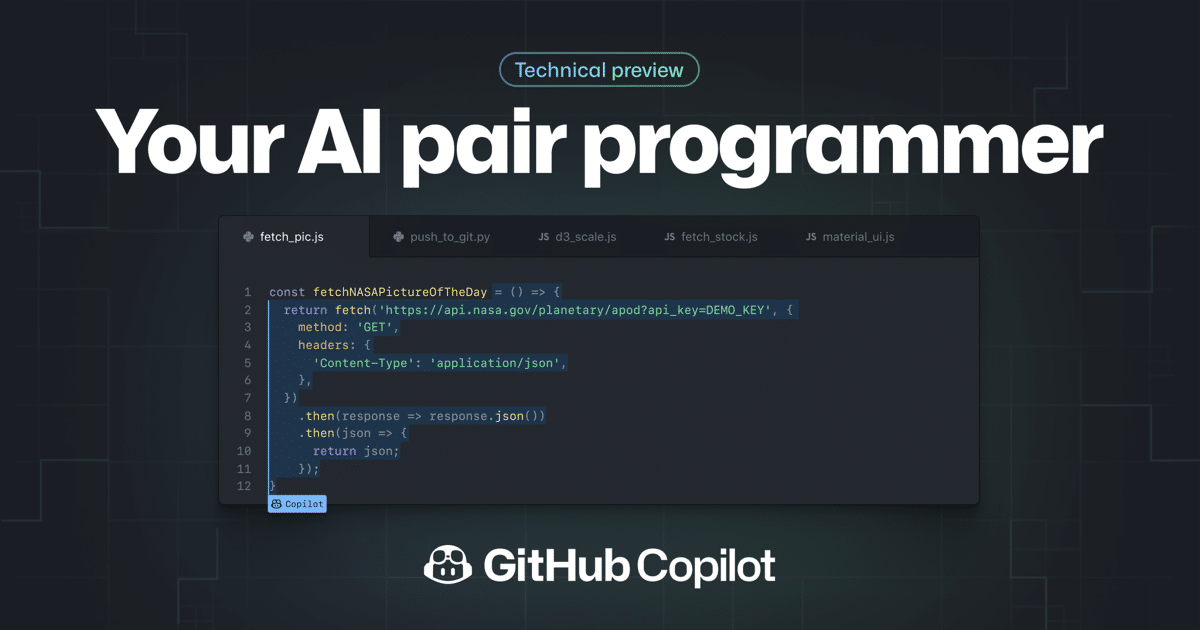

A few days ago we shared here on the blog the news about Copilot, GitHub's artificial intelligence assistant for writing code, which I basically presented as a help tool for programmers.

Copilot differs from traditional code completion systems in its ability to form quite complex code blocks, up to ready-to-use functions synthesized from the current context. Copilot is an AI tool that has learned from several million lines of code, and it recognizes what you are planning based on, for example, the definition of a function.

While Copilot represents a great time saver, the fact that it learned from millions of lines of code has started to raise fears that the tool could circumvent open source licensing requirements and violate copyright laws.

Armin Ronacher, a prominent developer in the open source community, is one of the developers frustrated with the way Copilot was built. He experimented with the tool and posted a screenshot on Twitter, remarking that it seemed strange to him that Copilot, a commercialized artificial intelligence tool, could produce copyrighted code.

Given this, some developers became alarmed at the use of public code to train the tool's artificial intelligence. One concern is that if Copilot reproduces large enough chunks of existing code, it could infringe copyright or launder open source code for commercial use without the proper license (basically a double-edged sword).

I don't want to say anything but that's not the right license Mr Copilot. pic.twitter.com/hs8JRVQ7xJ

- Armin Ronacher (@mitsuhiko) July 2, 2021

In addition, it was shown that the tool can also include personal information published by developers and that, in one case, it replicated the widely quoted code from the 1999 PC game Quake III Arena, including comments from developer John Carmack.

Cole Garry, a GitHub spokesperson, declined to comment and simply referred to the company's existing FAQ on the Copilot website, which acknowledges that the tool can reproduce snippets from its training data.

This happens about 0.1% of the time, according to GitHub, usually when users don't provide enough context around their requests or when the problem has a trivial solution.

“We are in the process of implementing an origin tracking system to detect the rare instances of code that is repeated from the training data, to help you make good real-time decisions about GitHub Copilot's suggestions,” says the company's FAQ.

Meanwhile, GitHub CEO Nat Friedman argued that training machine learning systems on public data is a legitimate use, while acknowledging that "intellectual property and artificial intelligence will be the subject of an interesting political discussion" in which the company will actively participate.

Not everyone is convinced. One developer wrote in a tweet:

“GitHub Copilot was, by its own admission, built on mountains of GPL code, so I'm not sure how this is not a form of laundering open source code into commercial works. The phrase 'does not usually reproduce the exact pieces' is not very satisfactory.”

“Copyright doesn't just cover copy and paste; it covers derivative works. GitHub Copilot was built on open source code, and the sum total of everything it knows is taken from that code. There is no possible interpretation of the term 'derivative' that does not include this,” he wrote. “The older generation of AI was trained on public texts and photos, on which it is more difficult to claim copyright, but this one is drawn from large bodies of work with very explicit, court-tested licenses, so I look forward to the inevitable massive class actions over this.”

Finally, we will have to wait and see what actions GitHub takes to modify the way Copilot is trained, since sooner or later the way it generates code could get more than one developer into trouble.